Run an expert MLP.

{

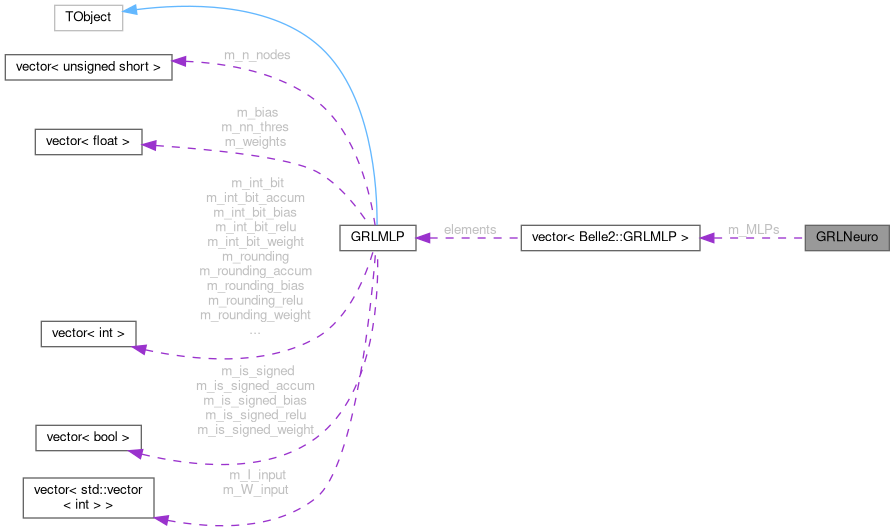

  // Expert network selected by isector, with its weights and biases.
  const GRLMLP& expert = m_MLPs[isector];
  vector<float> weights = expert.get_weights();
  vector<float> bias = expert.get_bias();

  // Per-layer fixed-point settings passed to sim_fixed for the biases.
  vector<int> total_bit_bias = expert.get_total_bit_bias();
  vector<int> int_bit_bias = expert.get_int_bit_bias();
  vector<bool> is_signed_bias = expert.get_is_signed_bias();
  vector<int> rounding_bias = expert.get_rounding_bias();
  vector<int> saturation_bias = expert.get_saturation_bias();

  // Per-layer fixed-point settings for the accumulator.
  vector<int> total_bit_accum = expert.get_total_bit_accum();
  vector<int> int_bit_accum = expert.get_int_bit_accum();
  vector<bool> is_signed_accum = expert.get_is_signed_accum();
  vector<int> rounding_accum = expert.get_rounding_accum();
  vector<int> saturation_accum = expert.get_saturation_accum();

  // Per-layer fixed-point settings for the weights.
  vector<int> total_bit_weight = expert.get_total_bit_weight();
  vector<int> int_bit_weight = expert.get_int_bit_weight();
  vector<bool> is_signed_weight = expert.get_is_signed_weight();
  vector<int> rounding_weight = expert.get_rounding_weight();
  vector<int> saturation_weight = expert.get_saturation_weight();

  // Per-layer fixed-point settings for the ReLU output.
  vector<int> total_bit_relu = expert.get_total_bit_relu();
  vector<int> int_bit_relu = expert.get_int_bit_relu();
  vector<bool> is_signed_relu = expert.get_is_signed_relu();
  vector<int> rounding_relu = expert.get_rounding_relu();
  vector<int> saturation_relu = expert.get_saturation_relu();

  // Per-layer fixed-point settings for the layer sum before the ReLU.
  vector<int> total_bit = expert.get_total_bit();
  vector<int> int_bit = expert.get_int_bit();
  vector<bool> is_signed = expert.get_is_signed();
  vector<int> rounding = expert.get_rounding();
  vector<int> saturation = expert.get_saturation();

  // Per-layer, per-node bit widths passed to sim_ap_fixed for the next layer's input.
  vector<vector<int>> W_input = expert.get_W_input();
  vector<vector<int>> I_input = expert.get_I_input();

  // Working copy of the network input.
  vector<float> layerinput = input;

  // Per-input bit widths passed to sim_ap_dense_0_iq. Both tables hold 24 entries,
  // so the loop below assumes at most 24 inputs.
  int W_arr[24] = { 12, 12, 11, 11, 8, 8, 7, 7, 5, 6, 6, 6, 8, 8, 6, 5, 4, 5, 7, 7, 6, 4, 5, 5 };
  int I_arr[24] = { 12, 12, 11, 11, 10, 9, 7, 7, 7, 7, 7, 7, 8, 8, 9, 9, 9, 9, 9, 9, 9, 9, 9, 9 };
  for (size_t i = 0; i < layerinput.size(); ++i) {
    if (i != 23) {
      layerinput[i] = sim_input_layer_t(layerinput[i]);
      layerinput[i] = sim_ap_dense_0_iq(layerinput[i], W_arr[i], I_arr[i]);
    } else {
      // Input 23 is always set to zero.
      layerinput[i] = 0;
    }
  }

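  vector<float> layeroutput = {};
  unsigned num_layers = expert.get_number_of_layers();

  // Quantized outputs of the last layer; this is the return value.
  vector<float> final_fixed = {};

  unsigned num_total_neurons = 0;  // offset into bias: nodes of the layers already processed
  unsigned iw = 0;                 // running index into weights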

  // Loop over the layer transitions. Writing the bound as i_layer + 1 < num_layers
  // avoids unsigned wrap-around of num_layers - 1 when num_layers is 0.
  for (unsigned i_layer = 0; i_layer + 1 < num_layers; i_layer++) {
    unsigned num_neurons = expert.get_number_of_nodes_layer(i_layer + 1);
    layeroutput.clear();
    layeroutput.assign(num_neurons, 0.);
    layeroutput.shrink_to_fit();

    // Start each output node from its bias, quantized first to the bias format
    // and then to the accumulator format.
    for (unsigned io = 0; io < num_neurons; ++io) {
      float bias_raw = bias[io + num_total_neurons];
      float bias_fixed = sim_fixed(bias_raw, total_bit_bias[i_layer], int_bit_bias[i_layer], is_signed_bias[i_layer],
                                   rounding_bias[i_layer], saturation_bias[i_layer]);
      float bias_contrib = sim_fixed(bias_fixed, total_bit_accum[i_layer], int_bit_accum[i_layer], is_signed_accum[i_layer],
                                     rounding_accum[i_layer], saturation_accum[i_layer]);
      layeroutput[io] = bias_contrib;
    }
    num_total_neurons += num_neurons;

    // Add the weighted inputs. weights is traversed input-major:
    // for each input, one weight per output node.
    unsigned num_inputs = layerinput.size();
    for (unsigned ii = 0; ii < num_inputs; ++ii) {
      float input_val = layerinput[ii];
      for (unsigned io = 0; io < num_neurons; ++io) {
        float weight_raw = weights[iw];
        float weight_fixed = sim_fixed(weight_raw, total_bit_weight[i_layer], int_bit_weight[i_layer], is_signed_weight[i_layer],
                                       rounding_weight[i_layer], saturation_weight[i_layer]);
        float product = input_val * weight_fixed;
        float contrib = sim_fixed(product, total_bit_accum[i_layer], int_bit_accum[i_layer], is_signed_accum[i_layer],
                                  rounding_accum[i_layer], saturation_accum[i_layer]);
        layeroutput[io] += contrib;
        ++iw;
      }
    }

    if (i_layer + 2 < num_layers) {
      // Hidden layer: quantize the sum, apply the ReLU, then quantize the ReLU output.
      for (unsigned io = 0; io < num_neurons; ++io) {
        float fixed_val = sim_fixed(layeroutput[io], total_bit[i_layer], int_bit[i_layer], is_signed[i_layer], rounding[i_layer],
                                    saturation[i_layer]);
        float relu_val = (fixed_val > 0) ? fixed_val : 0;
        layeroutput[io] = sim_fixed(relu_val, total_bit_relu[i_layer], int_bit_relu[i_layer], is_signed_relu[i_layer],
                                    rounding_relu[i_layer], saturation_relu[i_layer]);
      }

      // Feed the quantized activations to the next layer.
      layerinput.clear();
      layerinput.assign(num_neurons, 0);
      layerinput.shrink_to_fit();
      for (unsigned i = 0; i < num_neurons; ++i) {
        layerinput[i] = sim_ap_fixed(layeroutput[i], W_input[i_layer][i], I_input[i_layer][i], false, 1, 4, 0);
      }

      // Hard-coded nodes whose value is forced to zero before the next layer.
      if (i_layer == 1) {
        layerinput[1] = 0;
        layerinput[10] = 0;
        layerinput[18] = 0;
      } else if (i_layer == 2) {
        layerinput[2] = 0;
        layerinput[16] = 0;
      }

    } else {
      // Last layer: quantize each output with sim_result_t and collect it.
      unsigned num_final_fixed = layeroutput.size();
      for (unsigned io = 0; io < num_final_fixed; ++io) {
        final_fixed.push_back(sim_result_t(layeroutput[io]));
      }
    }
  }
  return final_fixed;
}
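
The listing relies on helpers such as `sim_fixed` whose definitions are not shown here. As a rough illustration of the pattern it follows (quantize the bias, quantize every weighted product into the accumulator, then quantize around the ReLU), here is a minimal sketch. The names `quantize_fixed` and `dense_relu_fixed` are hypothetical and are not part of the original code. The sketch assumes round-to-nearest and saturation on overflow, with the integer bits counting the sign bit, and it uses one format for all stages, whereas the listing passes separate per-layer formats and explicit rounding and saturation modes.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a fixed-point quantizer (NOT the sim_fixed of the listing).
// Assumption: total_bits includes int_bits, and int_bits includes the sign bit.
// Assumption: round to nearest, saturate on overflow.
float quantize_fixed(float value, int total_bits, int int_bits, bool is_signed)
{
  const int frac_bits = total_bits - int_bits;
  const double scale = std::ldexp(1.0, frac_bits);  // 2^frac_bits
  double raw = std::round(static_cast<double>(value) * scale);

  // Range of the underlying raw integer.
  const double max_raw = std::ldexp(1.0, is_signed ? total_bits - 1 : total_bits) - 1.0;
  const double min_raw = is_signed ? -std::ldexp(1.0, total_bits - 1) : 0.0;
  raw = std::min(std::max(raw, min_raw), max_raw);

  return static_cast<float>(raw / scale);
}

// Hypothetical single dense layer followed by a ReLU, mirroring the order of
// operations in the listing. weights is input-major: for each input, one
// weight per output node. bias.size() gives the number of output nodes.
std::vector<float> dense_relu_fixed(const std::vector<float>& input,
                                    const std::vector<float>& weights,
                                    const std::vector<float>& bias,
                                    int total_bits, int int_bits)
{
  const std::size_t n_out = bias.size();
  std::vector<float> out(n_out);

  // Start from the quantized bias.
  for (std::size_t io = 0; io < n_out; ++io) {
    out[io] = quantize_fixed(bias[io], total_bits, int_bits, true);
  }

  // Accumulate quantized products of inputs and quantized weights.
  std::size_t iw = 0;
  for (std::size_t ii = 0; ii < input.size(); ++ii) {
    for (std::size_t io = 0; io < n_out; ++io) {
      const float w = quantize_fixed(weights[iw++], total_bits, int_bits, true);
      out[io] += quantize_fixed(input[ii] * w, total_bits, int_bits, true);
    }
  }

  // Quantize the sum, apply the ReLU, and quantize the (unsigned) result.
  for (std::size_t io = 0; io < n_out; ++io) {
    const float summed = quantize_fixed(out[io], total_bits, int_bits, true);
    out[io] = quantize_fixed(std::max(summed, 0.0f), total_bits, int_bits, false);
  }
  return out;
}
```

With 8 total bits and 4 integer bits the step size is 1/16, so under these assumptions `quantize_fixed(0.3f, 8, 4, true)` lands on the nearest multiple of 1/16 and values beyond the signed range are clamped to its ends.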