|

Belle II Software development

|


Classes | |

| class | LSFResult |

Public Member Functions | |

| __init__ (self, *, backend_args=None) | |

| can_submit (self, njobs=1) | |

| bjobs (cls, output_fields=None, job_id="", username="", queue="") | |

| bqueues (cls, output_fields=None, queues=None) | |

| submit (self, job, check_can_submit=True, jobs_per_check=100) | |

| get_batch_submit_script_path (self, job) | |

| get_submit_script_path (self, job) | |

Public Attributes | |

| int | global_job_limit = self.default_global_job_limit |

| The active job limit. | |

| int | sleep_between_submission_checks = self.default_sleep_between_submission_checks |

| Seconds we wait before checking if we can submit a list of jobs. | |

| dict | backend_args = {**self.default_backend_args, **backend_args} |

| The backend args that will be applied to jobs unless the job specifies them itself. | |

Static Public Attributes | |

| str | cmd_wkdir = "#BSUB -cwd" |

| Working directory directive. | |

| str | cmd_stdout = "#BSUB -o" |

| stdout file directive | |

| str | cmd_stderr = "#BSUB -e" |

| stderr file directive | |

| str | cmd_queue = "#BSUB -q" |

| Queue directive. | |

| str | cmd_name = "#BSUB -J" |

| Job name directive. | |

| list | submission_cmds = [] |

| Shell command to submit a script, should be implemented in the derived class. | |

| int | default_global_job_limit = 1000 |

| Default global limit on the total number of submitted/running jobs that the user can have. | |

| int | default_sleep_between_submission_checks = 30 |

| Default time between re-checks of whether the number of active jobs is below the global job limit. | |

| str | submit_script = "submit.sh" |

| Default submission script name. | |

| str | exit_code_file = "__BACKEND_CMD_EXIT_STATUS__" |

| Default exit code file name. | |

| dict | default_backend_args = {} |

| Default backend_args. | |

Protected Member Functions | |

| _add_batch_directives (self, job, batch_file) | |

| _create_cmd (self, script_path) | |

| _submit_to_batch (cls, cmd) | |

| _create_parent_job_result (cls, parent) | |

| _create_job_result (cls, job, batch_output) | |

| _make_submit_file (self, job, submit_file_path) | |

| _add_wrapper_script_setup (self, job, batch_file) | |

| _add_wrapper_script_teardown (self, job, batch_file) | |

Static Protected Member Functions | |

| _add_setup (job, batch_file) | |

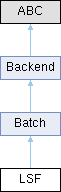

Backend for submitting calibration processes to an LSF batch system (jobs are submitted with `bsub`).

Definition at line 1625 of file backends.py.

| __init__ | ( | self, | |

| * | , | ||

| backend_args = None ) |

Definition at line 1646 of file backends.py.

|

protected |

Adds LSF BSUB directives for the job to a script.

Reimplemented from Batch.

Definition at line 1651 of file backends.py.
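As a rough illustration of what adding BSUB directives to a script can look like, the sketch below writes one directive per line using the prefixes listed under the static attributes of this class. The helper `write_bsub_directives`, its parameters, and the example values are hypothetical and are not taken from backends.py.

```python
import io

# Directive prefixes as listed in the static attributes of this class.
CMD_WKDIR = "#BSUB -cwd"
CMD_STDOUT = "#BSUB -o"
CMD_STDERR = "#BSUB -e"
CMD_QUEUE = "#BSUB -q"
CMD_NAME = "#BSUB -J"


def write_bsub_directives(batch_file, *, name, queue, working_dir, stdout, stderr):
    """Write one '#BSUB' directive per line (hypothetical helper)."""
    for prefix, value in (
        (CMD_QUEUE, queue),
        (CMD_WKDIR, working_dir),
        (CMD_STDOUT, stdout),
        (CMD_STDERR, stderr),
        (CMD_NAME, name),
    ):
        print(f"{prefix} {value}", file=batch_file)


# Example values are made up for illustration only.
script = io.StringIO()
script.write("#!/bin/bash\n")
write_bsub_directives(
    script,
    name="calib_job_0",
    queue="example_queue",
    working_dir="/tmp/calib/job_0",
    stdout="/tmp/calib/job_0/stdout",
    stderr="/tmp/calib/job_0/stderr",
)
directive_lines = script.getvalue().splitlines()
```

Because the directives are shell comments, the same file remains a valid bash script when it is run outside the batch system.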

|

staticprotectedinherited |

Adds setup lines to the shell script file.

Definition at line 807 of file backends.py.

|

protectedinherited |

Adds lines to the submitted script that help with job monitoring/setup. Mostly here so that we can insert `trap` statements for Ctrl-C situations.

Definition at line 814 of file backends.py.

|

protectedinherited |

Adds lines to the submitted script that help with job monitoring/teardown. Mostly here so that the exit code of the job command is written out to a file. This lets us know whether the command succeeded even if the backend server/monitoring database has purged the data about our job. For example, if PBS removes job information too quickly, we might never know whether a job succeeded or failed without some kind of exit code file.

Definition at line 839 of file backends.py.
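The value of such an exit code file can be sketched from the monitoring side. The snippet below assumes the file sits in the job's working directory and contains the integer exit status as plain text; both are assumptions, as is the helper name `read_exit_code`. Only the default file name comes from this page.

```python
import tempfile
from pathlib import Path

EXIT_CODE_FILE = "__BACKEND_CMD_EXIT_STATUS__"  # default name listed on this page


def read_exit_code(job_dir):
    """Return the recorded exit code, or None if it is missing or unreadable.

    Hypothetical helper: the file location and format are assumptions.
    """
    path = Path(job_dir) / EXIT_CODE_FILE
    try:
        return int(path.read_text().strip())
    except (FileNotFoundError, ValueError):
        return None


with tempfile.TemporaryDirectory() as tmp:
    # No file yet: the job has not finished (or never started).
    missing = read_exit_code(tmp)
    # Simulate the wrapper script recording a successful exit.
    (Path(tmp) / EXIT_CODE_FILE).write_text("0\n")
    finished_ok = read_exit_code(tmp) == 0
```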

|

protected |

Reimplemented from Batch.

Definition at line 1666 of file backends.py.

|

protected |

Reimplemented from Batch.

Definition at line 1782 of file backends.py.

|

protected |

We want to be able to call `ready()` on the top-level `Job.result`, so this method exists to make sure that a `Job.result` object is actually created. The result is mostly empty: it simply updates subjob statuses and allows the use of `ready()`.

Reimplemented from Backend.

Definition at line 1778 of file backends.py.

|

protectedinherited |

Useful for the HTCondor backend, where a separate submit file is needed instead of batch directives pasted directly into the submission script. It should be overridden if needed.

Reimplemented in HTCondor.

Definition at line 1180 of file backends.py.

|

protected |

Do the actual batch submission command and collect the output to find out the job id for later monitoring.

Reimplemented from Batch.

Definition at line 1675 of file backends.py.

| bjobs | ( | cls, | |

| output_fields = None, | |||

| job_id = "", | |||

| username = "", | |||

| queue = "" ) |

Simplistic interface to the `bjobs` command. Lets you request information about all jobs matching the filters 'job_id', 'username', and 'queue'. The result is the JSON dictionary produced by the ``-json`` option of bjobs.

Parameters:

output_fields (list[str]): A list of bjobs -o fields that you would like information about e.g. ['stat', 'name', 'id']

job_id (str): String representation of the job ID given by bsub during submission. If this argument is given, the output of this function contains information about this job only. If it is not given, all jobs matching the other filters are returned.

username (str): By default bjobs (and this function) returns information about the current user's jobs only. By giving a username you can access the job information of a specific user's jobs. By giving ``username='all'`` you will receive job information from all known user jobs matching the other filters.

queue (str): Set this argument to receive job information about jobs that are in the given queue and no other.

Returns:

dict: JSON dictionary of the form:

.. code-block:: python

{

"NJOBS":<njobs returned by command>,

"JOBS":[

{

<output field: value>, ...

}, ...

]

}

Definition at line 1818 of file backends.py.
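A dictionary of the documented form can be summarised with a few lines of standard Python. The payload below is hand-written for illustration: the field names `JOBID` and `STAT` and all values are assumptions, and nothing here calls `bjobs` itself.

```python
import json
from collections import Counter

# Hypothetical payload in the documented shape; field names and values are made up.
raw = """
{
    "NJOBS": 3,
    "JOBS": [
        {"JOBID": "101", "STAT": "RUN"},
        {"JOBID": "102", "STAT": "PEND"},
        {"JOBID": "103", "STAT": "RUN"}
    ]
}
"""

job_info = json.loads(raw)
# Count how many jobs are in each state.
status_counts = Counter(job["STAT"] for job in job_info["JOBS"])
```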

| bqueues | ( | cls, | |

| output_fields = None, | |||

| queues = None ) |

Simplistic interface to the `bqueues` command. Lets you request information about all queues matching the filters. The result is the JSON dictionary produced by the ``-json`` option of bqueues.

Parameters:

output_fields (list[str]): A list of bqueues -o fields that you would like information about, e.g. the default is ['queue_name', 'status', 'max', 'njobs', 'pend', 'run']

queues (list[str]): Set this argument to receive information about only the queues that are requested and no others. By default you will receive information about all queues.

Returns:

dict: JSON dictionary of the form:

.. code-block:: python

{

"COMMAND":"bqueues",

"QUEUES":46,

"RECORDS":[

{

"QUEUE_NAME":"b2_beast",

"STATUS":"Open:Active",

"MAX":"200",

"NJOBS":"0",

"PEND":"0",

"RUN":"0"

}, ...

]

}

Definition at line 1878 of file backends.py.
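Note that the numeric fields in the example output are strings, so they need converting before any arithmetic. The sketch below picks out open queues that still have free slots. The helper `queues_with_room` and the second sample record are hypothetical, and skipping records with non-numeric limits is an assumption of this sketch.

```python
def queues_with_room(bqueues_output, min_free=1):
    """Return names of open queues with at least `min_free` free slots (hypothetical helper)."""
    names = []
    for record in bqueues_output["RECORDS"]:
        if not record["STATUS"].startswith("Open"):
            continue
        try:
            # Values arrive as strings in the documented output.
            free = int(record["MAX"]) - int(record["NJOBS"])
        except ValueError:
            continue  # non-numeric limit: skipped in this sketch
        if free >= min_free:
            names.append(record["QUEUE_NAME"])
    return names


sample = {
    "COMMAND": "bqueues",
    "QUEUES": 2,
    "RECORDS": [
        # First record mirrors the documented example; the second is made up.
        {"QUEUE_NAME": "b2_beast", "STATUS": "Open:Active", "MAX": "200",
         "NJOBS": "0", "PEND": "0", "RUN": "0"},
        {"QUEUE_NAME": "example_full", "STATUS": "Open:Active", "MAX": "10",
         "NJOBS": "10", "PEND": "4", "RUN": "6"},
    ],
}
open_queues = queues_with_room(sample)
```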

| can_submit | ( | self, | |

| njobs = 1 ) |

Checks the global number of jobs in LSF right now (submitted or running) for this user.

Returns True if the number is lower than the limit, False if it is higher.

Parameters:

njobs (int): The number of jobs that we want to submit before checking again. Lets us check whether we are sufficiently below the limit to (somewhat) safely submit. It is slightly dangerous to assume that it is safe to submit many jobs at once, since other processes might also be submitting jobs. So njobs should not be set too high when you might be getting close to the limit, i.e. keep it <=250 and check again before submitting more.

Reimplemented from Batch.

Definition at line 1794 of file backends.py.
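The headroom check described above can be sketched as a pure function. This assumes the check compares the current active-job count plus `njobs` against the limit; the function name `has_room_for` and its signature are hypothetical and not part of the backend.

```python
def has_room_for(total_active_jobs, njobs=1, global_job_limit=1000):
    """Return True if submitting `njobs` more jobs stays within the limit.

    Hypothetical sketch: assumes the comparison includes the njobs headroom.
    """
    return (total_active_jobs + njobs) <= global_job_limit


# With 900 active jobs and the default limit of 1000 listed on this page:
small_batch_ok = has_room_for(900, njobs=100)
large_batch_ok = has_room_for(900, njobs=250)
```

Keeping `njobs` small narrows the window in which another process can push the account over the limit between the check and the submissions.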

|

inherited |

Construct the Path object of the script file that we will submit using the batch command. For most batch backends this is the same file as the bash script we submit, but some backends require a separate submission file that describes the job. To support that, override this function in the derived Backend class.

Reimplemented in HTCondor.

Definition at line 1336 of file backends.py.

|

inherited |

Construct the Path object of the bash script file that we will submit. It will contain the actual job command, wrapper commands, setup commands, and any batch directives.

Definition at line 860 of file backends.py.

|

inherited |

Reimplemented from Backend.

Definition at line 1205 of file backends.py.

|

inherited |

The backend args that will be applied to jobs unless the job specifies them itself.

Definition at line 797 of file backends.py.
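The attribute is built with the expression `{**self.default_backend_args, **backend_args}` shown in the attribute list, so values passed by the user take precedence over the class defaults. A minimal sketch of that merge follows; the keys and values are hypothetical, and treating `None` as an empty dict is an assumption about how the `backend_args=None` default is handled.

```python
def merge_backend_args(defaults, overrides=None):
    """Merge two dicts; entries in `overrides` win (hypothetical helper)."""
    # Assumption: a None argument is treated as "no overrides".
    return {**defaults, **(overrides or {})}


# Hypothetical keys and values, for illustration only.
defaults = {"queue": "example_default", "retries": 0}
merged = merge_backend_args(defaults, {"queue": "example_long"})
untouched = merge_backend_args(defaults)
```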

|

static |

Job name directive.

Definition at line 1638 of file backends.py.

|

static |

Queue directive.

Definition at line 1636 of file backends.py.

|

static |

stderr file directive

Definition at line 1634 of file backends.py.

|

static |

stdout file directive

Definition at line 1632 of file backends.py.

|

static |

Working directory directive.

Definition at line 1630 of file backends.py.

|

staticinherited |

Default backend_args.

Definition at line 789 of file backends.py.

|

staticinherited |

Default global limit on the total number of submitted/running jobs that the user can have.

This limit will not affect the total number of jobs that are eventually submitted. But the jobs won't actually be submitted until this limit can be respected i.e. until the number of total jobs in the Batch system goes down. Since we actually submit in chunks of N jobs, before checking this limit value again, this value needs to be a little lower than the real batch system limit. Otherwise you could accidentally go over during the N job submission if other processes are checking and submitting concurrently. This is quite common for the first submission of jobs from parallel calibrations.

Note that if there are other jobs already submitted for your account, then these will count towards this limit.

Definition at line 1156 of file backends.py.
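The chunked submission described above can be sketched as a loop that re-checks the limit before each chunk of N jobs and sleeps while there is no room. Everything in this sketch is hypothetical: `submit_in_chunks`, the injected callables, and the fake batch system stand in for the real backend so that the example runs without LSF.

```python
import time


def submit_in_chunks(jobs, submit_one, can_submit, jobs_per_check=100,
                     sleep_seconds=30, sleep=time.sleep):
    """Submit jobs in chunks, waiting until each chunk fits under the limit."""
    for start in range(0, len(jobs), jobs_per_check):
        chunk = jobs[start:start + jobs_per_check]
        # Wait until the whole chunk can be submitted without exceeding the limit.
        while not can_submit(len(chunk)):
            sleep(sleep_seconds)
        for job in chunk:
            submit_one(job)


# Fake batch system: 4 jobs already active, limit of 5.
active = {"n": 4}
submitted = []
naps = []


def fake_can_submit(njobs, limit=5):
    return active["n"] + njobs <= limit


def fake_submit(job):
    active["n"] += 1
    submitted.append(job)


def fake_sleep(seconds):
    naps.append(seconds)
    active["n"] = max(0, active["n"] - 2)  # pretend two jobs finished meanwhile


submit_in_chunks(list(range(6)), fake_submit, fake_can_submit,
                 jobs_per_check=2, sleep_seconds=30, sleep=fake_sleep)
```

All jobs are eventually submitted; the limit only delays them, which matches the statement that it does not affect the total number of jobs submitted.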

|

staticinherited |

Default time between re-checks of whether the number of active jobs is below the global job limit.

Definition at line 1158 of file backends.py.

|

staticinherited |

Default exit code file name.

Definition at line 787 of file backends.py.

|

inherited |

The active job limit.

This is 'global' because we want to avoid accidentally submitting too many jobs across all current and previous submission scripts.

Definition at line 1167 of file backends.py.

|

inherited |

Seconds we wait before checking if we can submit a list of jobs.

Only relevant once we hit the global limit of active jobs, which is usually set quite high.

Definition at line 1170 of file backends.py.

|

staticinherited |

Shell command to submit a script, should be implemented in the derived class.

Definition at line 1143 of file backends.py.

|

staticinherited |

Default submission script name.

Definition at line 785 of file backends.py.